Time to First Token, or TTFT, is the pause between sending a prompt and seeing the first word come back. In most AI products, that's the delay users feel first, judge first, and remember first.

That makes hardware a latency decision, not only a cost or throughput decision. If you're building chat, voice, or agents, the question isn't only "how many tokens per second can this chip push?" It's "how quickly can it start?"

What TTFT really measures, and why users notice it straight away

TTFT is easy to explain and easy to miss. It starts when your app sends the request and ends when the first output token arrives at the client. A practical definition from Deepchecks' TTFT guide matches what product teams care about, the moment the UI can show that the model has begun to answer.

That first moment matters more than many teams expect. A model can stream fast once it starts, yet still feel slow if the opening pause drags. That's why TTFT is not the same as total latency, and it's not the same as throughput. Throughput tells you how fast tokens flow after the engine is already running. TTFT tells you how long the engine takes to cough into life.

The parts of inference that shape first-token latency

Several things stack up before the model emits a single token. Prompt processing, often called prefill, can dominate if your context is long. Model warm-up can hurt if weights or kernels aren't ready. Memory access matters because big models spend much of their time waiting for data, not maths.

Then there are software costs. Kernel launches add overhead. Batching can hold a request in the queue whilst the system waits to fill a batch. Data movement across PCIe, network links, or disaggregated memory adds more delay. Production metrics guidance for TTFT and queue time makes the point clearly, perceived responsiveness is usually shaped by prefill time and queue wait, not raw compute alone.

Why TTFT feels different in chat, voice, and agents

A 300 ms wait in an offline summarisation job is irrelevant. The same wait in a voice assistant feels sticky. At 600 ms, people start to hesitate. At 1 second, they wonder whether the system heard them at all.

Users don't judge "fast" by the last token. They judge it by the first one.

This is why TTFT has become the frontline metric for assistants, copilots, and streaming agents. The first response sets trust. Everything else follows it.

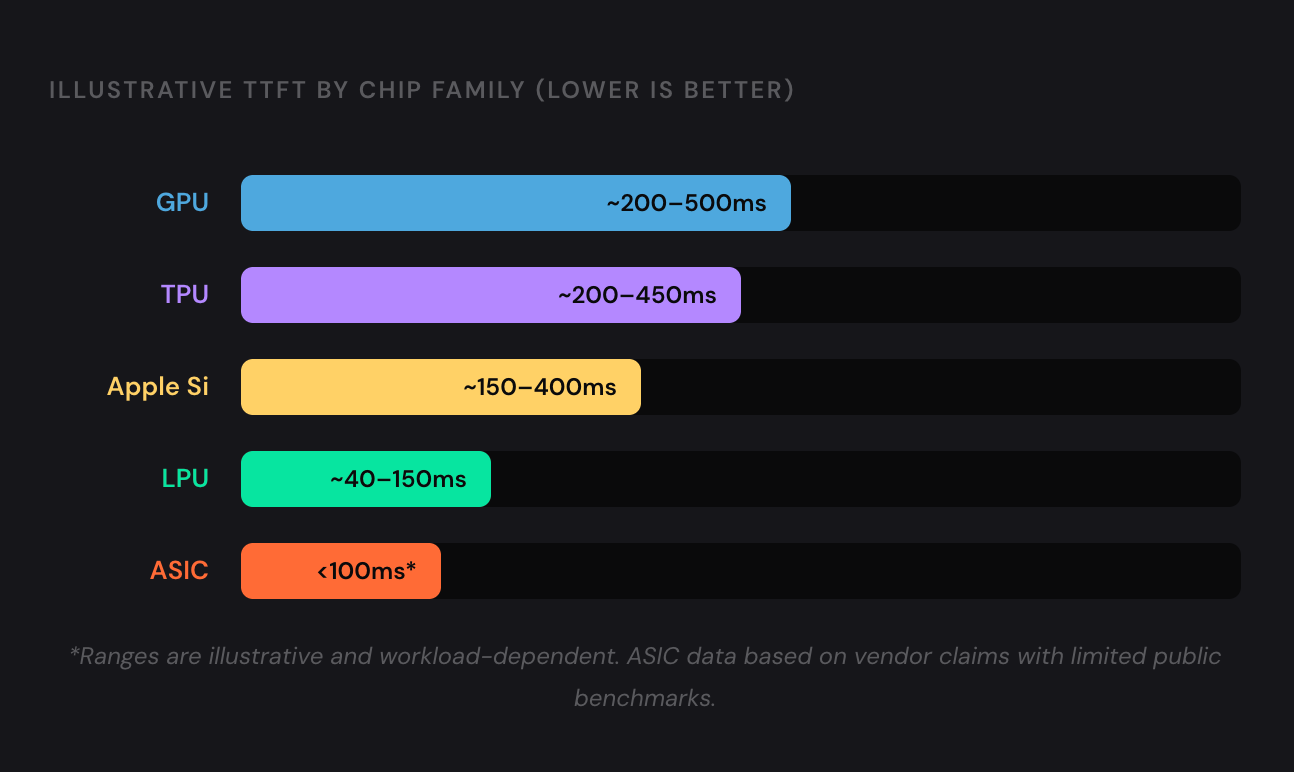

How each chip family handles low latency in practice

Different chips attack the same problem from different directions. Some optimise flexibility. Some optimise compiler control. Some try to cut the memory wall by design. Here is the practical picture for inference.

| Chip family | TTFT profile | Main trade-off | Best fit |

|---|---|---|---|

| GPU | Often good, sometimes held back by batching and launch overhead | Flexible, but not always lowest first-token latency | General-purpose serving, mixed workloads |

| TPU | Efficient in managed stacks | Less universal outside Google's stack | Large-scale controlled deployments |

| LPU | Excellent, predictable low latency | Narrower model and deployment fit | Real-time AI, voice, interactive agents |

| Apple Silicon | Strong for local inference and small models | Not a datacentre-first option | On-device AI, local dev, edge |

| Taalas / Fractile-style ASICs | Designed to slash memory movement | Early ecosystem, limited public TTFT data | Specialised low-latency inference |

The short version is simple. GPUs win by being good at almost everything. LPUs and emerging ASICs win when first-token delay is the product requirement.

GPUs, flexible and fast, but not always the quickest to first token

GPUs still run most inference stacks for good reasons. They have huge memory bandwidth, mature runtimes, strong framework support, and a supply chain people know how to buy. They also scale well across clusters.

But GPU software stacks carry overhead. Kernel launches, scheduler behaviour, queueing, and aggressive batching can push TTFT up, even when total tokens per second look excellent. A useful GPU versus TPU transformer benchmark shows why GPUs often win small-batch inference and latency-sensitive work, whilst other accelerators can catch up when sequence lengths and batch sizes climb.

TPUs, efficient for managed workloads, but less universal for inference latency

TPUs are strong when the environment is controlled. Google has built them for machine learning at scale, with tight integration across compiler, runtime, and infrastructure. That can make them efficient for large deployments and predictable service patterns.

For TTFT, the picture is less clear in public data. Available May 2026 benchmark snapshots show respectable inference performance, but much less public TTFT detail than you get for GPUs or Groq-style hardware. That doesn't make TPUs slow. It means the public comparison is thin, especially outside Google Cloud-centric setups.

LPUs and Groq-style designs, built for deterministic speed

LPU-style silicon is the cleanest latency-first argument in this whole market. Pre-planned execution reduces jitter. Predictability matters because real-time systems hate variance almost as much as they hate high averages.

Current public benchmark summaries point in the same direction. Groq's LPU shows very strong low-variance behaviour for real-time workloads, and live latency benchmark coverage has pushed that point into the open. The trade-off is flexibility. Special-purpose hardware can be brilliant for selected models and serving patterns, yet less forgiving when your stack changes every week.

Apple Silicon for local AI, efficient at the edge, but not a datacentre latency king

Apple Silicon is the interesting outsider. Unified memory reduces copying. Power efficiency is excellent. Local inference on smaller or quantised models can feel snappy, especially for developers and edge use cases.

The catch is scale. Apple Silicon is not the usual answer when a platform team is chasing multi-tenant datacentre TTFT under load. Still, for private assistants, demos, and local tooling, it punches above its weight. One M4 Pro native compute report recorded low TTFT in a local setup, but those results depend heavily on model size, quantisation, and the Metal or MLX path used.

Taalas model-specific silicon that attacks the memory wall

This is where things get more radical. Taalas is pushing the idea that trained models should be turned into hardware, not merely run on it. That cuts data movement and strips away much of the general-purpose baggage that slows inference. Its HC1 technology demonstrator claims very high per-user inference speed and positions low-latency serving as the core point, not a side effect.

Fractile, a UK start-up in the same broad conversation, is associated with a memory-centric approach for low-latency LLM serving. Public May 2026 TTFT benchmarks are still sparse, so caution matters here. The design thesis is still worth watching, though: if memory traffic is the bottleneck, then silicon that keeps weights close and movement low has a real shot at cutting first-token delay. Anthropic is in talks to buy AI inference chips from UK startup Fractile.

TTFT versus throughput, the trade-off behind every infrastructure choice

The fastest chip to first token is not always the best business decision. A large GPU fleet can be cheaper per token when requests batch well and utilisation stays high. That's why GPUs remain dominant.

When throughput wins and when it does not

If you're running bulk summarisation, nightly document processing, or asynchronous classification, throughput is king. A few hundred extra milliseconds at the start hardly matters if cost per million tokens drops.

Batching is the usual trick. It lifts efficiency, but often at the expense of TTFT because requests wait for company. That is fine for background work. It is bad for live assistants.

Where low-latency silicon changes the economics

Voice changes the maths. So do streaming agents and co-pilots embedded in active workflows. In those systems, lower TTFT reduces awkward silences, cuts perceived lag, and can stop users bailing out of the interaction.

That means deterministic latency can beat peak throughput. A chip that starts in 80 ms every time may create more product value than one that starts in 400 ms but wins on long-run tokens per second.

Choosing the right hardware for the workload, not the hype

This is where many buying decisions go wrong. Teams compare chips in the abstract, then deploy them into workloads that punish the wrong metric. Hardware choice should follow prompt size, model size, concurrency, latency target, and cost per request.

Chatbots and copilots that need fast, but not instant, replies

Most text chat products can live happily on GPUs. If your assistant is typing back in a web UI, a modest TTFT is often acceptable, especially when elasticity and mature tooling matter more than shaving every millisecond.

Voice, audio, and real-time assistants need the lowest TTFT

Spoken systems are harsher. Humans notice pause and overlap in conversation immediately. If you build call-centre copilots, live interpreters, or speech-driven agents, ultra-low TTFT is not a nice extra. It's core product quality.

Streaming agents and interactive tools need predictable latency

Agent workflows often stream partial answers, call tools mid-response, and coordinate downstream services. In that setup, consistency matters as much as raw speed. Low-variance hardware keeps orchestration clean and user experience stable.

Batch inference still belongs on the cheapest efficient stack

Not every job needs sub-second starts. Bulk enrichment, offline analytics, scheduled evaluation, and large document pipelines can stay on GPU-heavy or other cost-optimised stacks. Spending extra for ultra-low TTFT there is usually waste.

Why most organisations will end up with mixed compute, not a single winner

No serious platform should bet everything on one chip family. Supply constraints change. Prices move. Vendor lock-in gets expensive. Data residency rules complicate placement. The practical answer is heterogeneous compute under one control plane, with Kubernetes doing the routing, policy, and isolation work.

Routing work to the right chip based on latency needs

A sensible platform sends low-TTFT traffic to latency-first hardware and leaves high-throughput work on GPUs. That could mean voice requests on LPUs or specialist ASICs, chat on GPUs, and local private workflows on Apple Silicon at the edge.

If you're planning that architecture, it helps to Book a Meeting with our Infra Experts. The hard part is not naming the best chip. It's wiring scheduling, placement, and policy so the right request lands on it every time.

The limits of single-vendor thinking in AI infrastructure

Single-vendor stacks are simple until they aren't. Outage exposure rises. Capacity gets tight. Compliance can force workloads into specific regions or on-prem estates. A portable compute strategy gives you room to move without rewriting the whole serving layer.

For most teams, that means pooling GPU, TPU, LPU, Apple Silicon, and newer ASIC capacity where it makes sense, then making placement a policy decision rather than a procurement accident.

Conclusion

TTFT is the metric users feel first, and hardware shapes it more than most teams admit. GPUs still own a huge share of inference because they balance flexibility, scale, and throughput well. But they are not the automatic winner when the product lives or dies on the first 100 ms.

The stronger pattern is mixed compute. Put voice, audio, and real-time assistants on the fastest low-latency hardware you can justify. Keep throughput-heavy jobs on GPUs where economics still work.

The future is not choosing one chip. It's orchestrating many, intelligently, at scale.